AI regulation talk — the rules are that there are no rules

The dust is yet to settle after the India AI Impact Summit, but the UN process for the Global Dialogue on AI Governance is already upon us. Since generative AI found its iPhone moment in 2022, governments have been entrapped in the proverbial ‘Red Queen’ effect, running just to stay in the same place. The breakneck pace of AI innovation has resulted in global-scale anxiety.

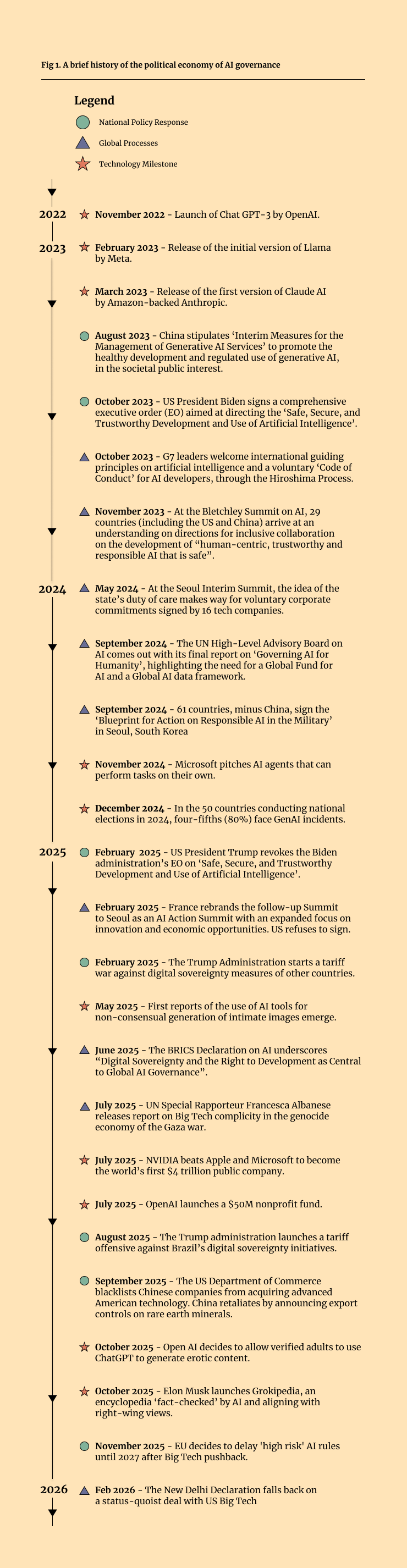

The chatter on regulation is deafening. In just the four years from 2022 to 2026, the world has witnessed annual AI Summits (from Bletchley to Delhi), high-profile plurilateral initiatives (the G-7’s Hiroshima AI Process in 2023, the OECD’s voluntary reporting framework on AI risk management in 2025, and the BRICS Declaration on AI in 2025), and the establishment of the Global Dialogue by the UN in August 2025.

Yet, beyond the din of declarations lies the stark reality of countries scaling down AI regulation in 2025 — a palpable expediency that is dangerous for democracy worldwide. Strategic economic and military interests under the Trump administration have skewed AI deployment on the international stage, resulting in a rollback of some Biden-era safety rules. Silicon Valley Big Tech has more than taken the cue — dropping any pretence of caring about ethics, normalizing recklessness. Corporate lobbying in the EU has led to a dilution of the EU AI Act, as permissive innovation and simplified compliance have become stand-ins for politico-economic sovereignty. Southern players like India have steered clear of a single comprehensive law, inviting industry to go forth and innovate within the comfort of voluntary standards. Meanwhile, China’s ‘controlled’ innovation approach speaks to an aggressive drive for AI integration in domestic manufacturing, education, and health, with internal political impulses guiding the market.

Governance calls for intentionality; it needs pause, taking stock, examining competing considerations, and going back to constitutional values, public interest, and democratic visions of the future to make the decisions of the day. This short history of the AI governance landscape seems to suggest otherwise. In this essay, we reflect on the shifting interests and self-serving politics that have kept the needle on the AI governance compass deflected, preventing global alignment on our collective moral futures. We argue that the Global Dialogue on AI Governance must move the needle towards a geopolitics of universal solidarity and wellbeing, anchored in mutual responsibility and shared purpose.

Summit-speak on safety — why outcomes have tiptoed around the known knowns

AI regulation rhetoric often tilts toward the unbounded opportunity of AI, with the addressing of risks treated as subsidiary. This optimistic slant is not an accident. It is carefully cultivated by a small group of ‘edge-lord’ libertarians, who cast the harms of the AI paradigm as aberrations, things to tinker with on the way to humanity’s deliverance by the transhuman miracles of AI. The AI gospel is sold by its omnipotent purveyors as capable of transcending biological limitations, eliminating suffering and disease, and even averting death. In this manufactured reality, the real materialities of AI-induced harm are naturalized as irrelevant.

The central agenda of the first global AI Summit in Bletchley (November 2023) was to develop shared consensus “on the opportunities and risks of AI”, and “the need for collaborative action on frontier AI safety.” Twenty-nine countries (including the United States and China) were able to arrive at an initial understanding on the directions for international collaboration to develop “human-centric, trustworthy and responsible AI that is safe.” Participating countries committed broadly to the development of appropriate state-led evaluation and safety research, and participating companies expressed support for the next iteration of their models to undergo appropriate independent evaluation and testing. It was agreed that “governments should apply the principle that models must be proven to be safe before they are deployed (a ‘precautionary’ approach), with a presumption that they are otherwise dangerous.”

But the trajectory of the debate was about to change. The year 2024 witnessed a new belligerence in Silicon Valley. The novelty of ChatGPT was fading, and Big Tech shifted to monetizing AI. Companies like NVIDIA surpassed cloud giants in market value due to demand for AI infrastructure, while Google, Microsoft, Meta, and Amazon were integrating AI into their products. The GenAI arms race was intensifying, with a proliferation of strategic partnerships, cross-sectoral collaborations, and targeted solutions. AI was shifting from simple conversational bots to agentic AI, capable of completing multi-step tasks with minimal human oversight.

For the big players outcompeting each other in the GenAI market, the accuracy of their models was a key business concern to ensure their products showed up without false positives. Unlike 2023’s rushed AI adoption, early 2024 saw key leaders in the industry prioritizing AI data accuracy, governance, and security to combat hallucinations and bias. Companies were also adopting a “Zero Trust” architecture to combat AI-powered cyberattacks. Resilient infrastructure was a vital ingredient for revenue and market performance.

However, the safety debate had shrunk. By the Interim AI Summit held in Seoul in May 2024, with GenAI’s capabilities still unfolding, the Global South in particular was more than cognizant that innovation and inclusivity needed to be on the agenda for Paris. Safety, for the countries at the table, was now a focus on malicious AI risks (such as capabilities to assist the development and use of chemical or biological weapons) and an agreement to work on risk thresholds for severe AI risks. The idea of the state’s duty of care with respect to safe AI for the people did not reappear in subsequent Summits.

In Seoul, sixteen technology companies, including prominent ones in the GenAI space – Meta, Anthropic, OpenAI, xAI, and the Chinese company Zhipu AI – volunteered to publish safety frameworks for measuring risk and to develop thresholds for determining ‘intolerable’ risk, and promised not to deploy models that exceeded those thresholds. These were empty promises that did not see the light of day. Influential blocs such as the OECD piggybacked on the voluntary approach, even as the perils of AI technologies were becoming evident across the globe. For instance, of the fifty countries that held national elections in 2024, four-fifths (80%) faced GenAI incidents.

The EU AI Act, which entered into force in August 2024 to regulate AI based on risk levels, was a silver lining, no doubt – banning unacceptable risks like social scoring, mandating strict rules for high-risk AI, and stipulating transparency obligations for general-purpose AI models. However, the US Presidential Elections that put President Trump back in office created a new normal. As discussed before, a changing geopolitical reality eventually saw the European AI law amended to favor European ‘competitiveness’ – drawing scrutiny from the European Data Protection Board (EDPB) and European Data Protection Supervisor (EDPS) regarding potential risks to fundamental rights and data protection standards.

Almost immediately after assuming office in January 2025, President Trump revoked the Biden administration’s Executive Order on Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, which had sought to establish guardrails for AI. For US trade and foreign policy, the tech bros and their wares were always critical ammunition. In the case of the new administration, a deregulated tech industry minus all the safety frills was easy opportunism. At the Paris AI Summit the following month, US Vice President J.D. Vance railed against excessive AI regulation that could cripple innovation. The Paris Summit’s final statement merely ‘noted’ the voluntary commitments on AI safety (a statement that the US ultimately did not sign).

A new epoch of no-holds-barred AI was upon us. In July 2025, UN Special Rapporteur Francesca Albanese demonstrated the complicity of a number of Big Tech companies in the economy of genocide in Gaza, a list that included signatories of the Frontier AI Safety Commitments. In October 2025, even as OpenAI announced its decision to allow verified adults to use ChatGPT to generate erotic content, Elon Musk launched Grokipedia, an encyclopedia ‘fact-checked’ by AI, aligning with right-wing views. For the tech barons, it was now better to be sorry than safe. December 2025 saw Grok AI, Musk’s chatbot, integrated into the social media platform X. The tool was marketed as a less-restricted, ‘edgier’ alternative to other AI models, allowing users to create nonconsensual sexually explicit images and deepfakes: extreme irresponsibility masquerading as innovation.

The 2026 India AI Summit was geared to ruffle no feathers, showcase India’s AI solutions, and use the occasion for prestige diplomacy. Despite mounting evidence on the inefficacy of the voluntary safety commitments approach, the India edition did not change course. The final Summit Declaration from Delhi, endorsed by over 90 countries (including both the US and China), continues to place faith in “industry-led voluntary measures.”

As things stand, AI governance is at a precarious point, with no political will to rein in the tech corporations. From mines to microchips, drudgery to data, genetic material to GenAI, synthetic data to missile strikes, we are witnesses to global AI value chains that brazenly disregard human dignity, social peace, and ecological well-being. The unprecedented geoeconomic power of the tech titans, fuelled by the US oligarchy, and the race to keep up with the innovation economy have eliminated considerations of global democratic accountability in AI governance. On the road to the Global Dialogue in July 2026, the conversation is badly in need of a moral compass that recognizes the limits and dangers of AI solutionism and the need for global AI rules that acknowledge the wrongs of the current paradigm. The future to be secured is for humanity, not corporate profiteering.

AI for the Global South — is catch-up even possible?

The UN Global Digital Compact (GDC) – adopted by the UN in September 2024 – provided a space to acknowledge the challenges for the Global South in the current digital order. It brought to the table the aspirations of the majority world for digital sovereignty. The ‘Governing AI for Humanity’ report, also released in the same month, articulated “the creation of a global fund for AI to put a floor under the AI divide” as a critical priority. The UN GDC underscored this agenda through an explicit commitment to building “capacities, especially in developing countries, to access, develop, use and govern artificial intelligence systems and direct them towards the pursuit of sustainable development.”

With a new US administration taking office in early 2025, and an international system dented by a trust deficit, development and humanitarian assistance suffered a huge setback. The idea of digital sovereignty descended to its most watered-down version, leaving developing countries to navigate, each on its own, a daunting economic playing field with few options. In late 2024, China had already abstained from accepting a global non-binding pact banning AI from controlling nuclear weapons at the ‘Responsible Artificial Intelligence in the Military Domain’ conference. China maintained that specific AI rules could violate its sovereignty and reduce accountability in conflict. The Biden administration at that point was introducing a range of measures intended to slow China’s development of an indigenous semiconductor ecosystem and advanced AI capabilities.

The AI Action Summit in Paris (Feb 2025), co-chaired by France and India, also issued a call “to narrow the inequalities and assist developing countries in AI capacity building” and re-center the role of the UN in shaping global AI governance. Even though the US backed out, some commentators have seen this narrative shift as a ‘win’ for bringing back the development agenda front and center of global AI governance discussions.

Just a few days after the Paris Summit in Feb 2025, the Trump administration formally launched a policy of tariff warfare against digital sovereignty assertions by other countries, targeting any regulation imposed by foreign governments on the operations of US digital service companies. This not only overturned the accepted norms and legitimate purposes of tariff measures, such as national security and support for developing industries, but also converted trade policy into a blatant instrument for furthering Silicon Valley’s imperial ambitions.

A few months later, the BRICS bloc came together to send a strong message. The BRICS Leaders’ ‘Statement on the Global Governance of AI’, adopted in Brazil in July 2025, explicitly intended to “support a constructive debate towards a more balanced approach” in fostering “responsible development, deployment, and use of AI technologies for sustainable development and inclusive growth.” The Statement upheld digital sovereignty and the right to development as central to global AI governance. It is no coincidence that just a month later, the Trump administration launched a tariff offensive against Brazil, specifically targeting the country’s efforts to regulate US social media platforms operating in the country and the participation of US firms in its digital public infrastructure for payment services (Pix), a direct attack on Brazil’s attempt to exercise its digital sovereignty. In September 2025, the US Department of Commerce blacklisted Chinese companies from acquiring advanced American technology; China retaliated by announcing export controls on the rare earth minerals essential for the US semiconductor industry. This interdependence in the tech supply chain and market pressure forced both countries to come to a fragile de-escalation in Busan, in Jan 2026, through a temporary truce. In the second half of 2025, India also came into the line of fire, at one point being threatened by the US government with 50% tariffs and facing tremendous pressure from Washington to sign away its digital sovereignty rights for a trade deal with the US – including abandoning rights to impose digital service taxes, regulate cross-border data flows, and institute data localization measures.

The India AI Summit was an opportunity to respond with moral courage to this impasse. It could have orchestrated a strong coalition of countries promoting a solidarity-based global AI governance architecture attentive to Southern concerns. A principled consensus with strategic gains could have been forged among a group of nation-states committed to digital democracy and digital sovereignty. But this was not to be. In a world where essential infrastructures of connection remain in the hands of one country and its corporations, the headwinds are adverse, no doubt. Also, no hosting government wants a diplomatic failure. The New Delhi Declaration, unfortunately, fell back on a status-quoist deal for India with US Big Tech, reminiscent of the French Summit in 2025, which had resulted in 100 billion USD flowing into the host country’s AI ecosystem as private investment. The final governance script in New Delhi was pretty predictable – a voluntary and non-binding framework for AI.

The writing on the wall is clear – it is not possible for the majority world to play catch-up. Around two-thirds of existing data centers are located in the United States, China, or Europe. Africa alone possesses less than 1% of the world’s data center capacity. In some nations, the cost of a single GPU can represent 60–75% of GDP per capita, making it virtually unaffordable. Massive, structured, high-quality data collection and consistent energy supplies are beyond the current capacities of most developing nations.

Today, as the discourse shifts towards alternative models of AI, concrete pathways for international cooperation – grounded in a binding global agreement – become vital. A rejection of the extractive colonial apparatus that Big Tech-controlled infrastructures represent is a collective responsibility of all stakeholders. As the wealthiest of nations renege on their development finance commitments, and public finance avenues shrink, corporate philanthropy is raising its head as the saviour. Last July, OpenAI launched a $50M nonprofit fund. Major AI companies, from Microsoft and Nvidia to IBM, Amazon, and Meta, have followed with funding, tools, and infrastructure. Google has recently announced a grant for government AI innovation, with support and mentorship. Anthropic candidly explained why investing in social good makes business sense: “It serves our broader work in AI development. The lessons we learn deploying AI to organizations serving vulnerable populations – lessons about privacy, trust, and responsible use – inform how we approach deployment everywhere,” they admitted. The democratic deficit in global AI governance has proven to be disastrous – the South has ended up as the field-testing environment, opening the floodgates for its data, labor, and natural resources to be transferred into the coffers of Big Tech.

In the enmeshed world that AI is co-shaping, mutuality and reciprocity in global governance are non-negotiable. AI self-determination for nations and peoples from the Global South is critical. Those excluded and rendered irrelevant by the current AI paradigm should not have to run twice as fast. They just need to get to another place from here on their own terms.

Fig 1. A brief history of the political economy of AI governance

Concluding thoughts: A global dialogue with a conscience

Dominant countries and big tech corporations have profiteered heavily from a ‘finders keepers’ digital economy. As the site of extraction for this unsustainable digital paradigm, the Global South has seen egregious harms: impoverishment of the data commons, hollowing out of productive local resources, and brutal exploitation of labor. The history of AI governance also points to the limits of corporate volunteerism in fixing an AI economy that is broken. The time to recognize this irrefutable reality, and to reject the paradigm behind it, is now.

The norms and rules that hold a dialogic space create and shape its outcomes. The Global Dialogue on AI Governance must be intentionally designed to galvanize a genuine, non-instrumental communicative rationality; an open, honest, and unconstrained conversation. It must architect the conditions for a critical mass of actors – governments, civil society, academia, and the technical community – to rally around the imperative and plausibility of another AI paradigm.

The arc of humanity is predicated on whether international cooperation can embrace the following without delay:

- i) a precautionary approach to AI innovation — with clear public and corporate accountability safeguards grounded in international human rights law, and

- ii) a concrete mechanism to support peoples and countries of the Global South in realizing their own vision, and another imagination, of AI-assisted futures.

As we have argued elsewhere, this calls for “common but differentiated responsibilities”: a shared understanding to build humane AI futures in which everyone has a place, and for which the powerful and the plunderers must bear a heavier burden.